Can We Store AI Memories in a 200km Fiber Loop? The 2026 Answer to the Memory Wall

Key points

To bypass the 2026 AI memory bottleneck, John Carmack proposed a 200km fiber-optic loop as a secondary cache, using light's propagation delay to store 32+ GB of model weights in flight, achieving extreme bandwidth but facing engineering challenges in signal recirculation.

Key takeaway

John Carmack's 2026 proposal for a photonic delay-line cache is a paradigm-shifting, theoretically sound response to the AI memory crisis. It converts storage into a high-bandwidth transmission challenge, leveraging mature telecom tech to achieve unparalleled bandwidth (32+ TB/s) and power efficiency by circumventing the economic and physical limits of HBM. Its viability is uniquely tied to the deterministic weight-streaming pattern of LLM inference, allowing latency hiding. While critical engineering hurdles in signal integrity (noise accumulation) and thermal stability persist, the concept successfully redirects industry focus toward optical solutions and hybrid architectures, accelerating the adoption of Co-Packaged Optics. More than a cache, it embodies a hardware philosophy for temporal, streaming AGI, charting a course toward scaling intelligence with light itself.

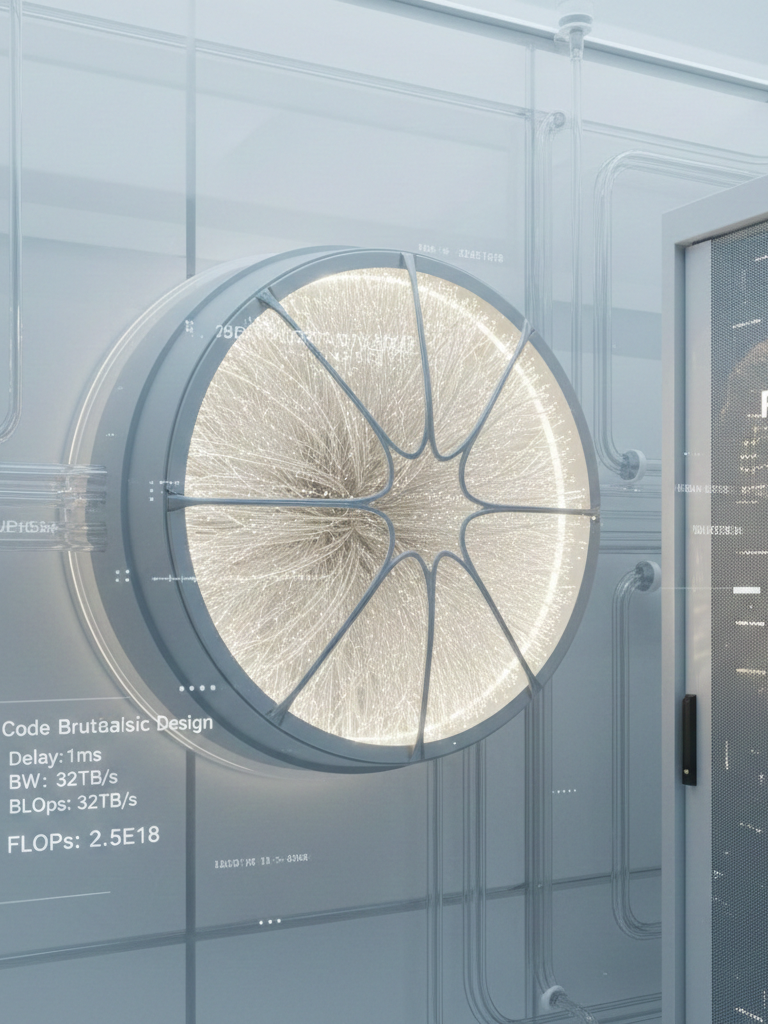


The technological landscape of 2026 is dominated by a critical contradiction: AI accelerator compute scales exponentially, but the memory systems feeding those accelerators have hit a physical and economic wall. In February 2026, John Carmack proposed a radical solution: using a 200-kilometer fiber-optic line as a high-bandwidth secondary cache. By exploiting the propagation delay of light, massive AI model weights would circulate continuously at hundreds of terabits per second, aiming to sidestep the cost and energy overhead of traditional DRAM and HBM.

The proposal grew out of the catastrophic global memory crisis of early 2026. Data centers are projected to consume over 70% of all high-end memory chips, driven by trillion-parameter models like GPT-5. HBM3e and HBM4 are prohibitively expensive and thermally limited. Carmack noted that 256 Tb/s data rates have already been demonstrated over 200km of fiber, suggesting a path around the silicon bottleneck.

The concept revives the mid-20th-century principle of delay-line memory, with modern silica fiber replacing the acoustic media (such as mercury columns) of early delay-line memories. Light travels at ~204,191 km/s in fiber, so a 200km loop provides about 0.98 ms, roughly 1 millisecond, of propagation delay. That latency is manageable for the streaming, predictable access pattern of AI weights.
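The delay figure is simply loop length divided by the speed of light in the fiber. A minimal sketch, assuming the ~204,191 km/s group velocity quoted above (an effective group index of about 1.468); `loop_delay_s` is a hypothetical helper name:

```python
# One-pass delay of a fiber delay line: delay = length / group velocity.
# Assumption: group velocity ~204,191 km/s, the figure quoted in the text.
C_VACUUM_KM_S = 299_792.458
V_FIBER_KM_S = 204_191.0

def loop_delay_s(length_km: float, v_km_s: float = V_FIBER_KM_S) -> float:
    """Time for light to complete one pass of the loop, in seconds."""
    return length_km / v_km_s

print(f"effective group index ~ {C_VACUUM_KM_S / V_FIBER_KM_S:.3f}")
print(f"200 km loop delay ~ {loop_delay_s(200.0) * 1e3:.3f} ms")
```

Because delay scales linearly with length, halving the loop to 100 km halves the delay and, at a fixed line rate, halves the capacity as well.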

The core physics is capacity C = bandwidth B × delay Δt. At 256 Tb/s over a ~1 ms loop, C = 256 Gb = 32 gigabytes. Because every stored bit passes the read point once per loop, the cache delivers the full line rate: 256 Tb/s, or 32 TB/s, far exceeding the 4.8 TB/s of an NVIDIA H200's HBM3e. The 1 ms latency can be hidden via pipelining because AI inference accesses weights in a strict, deterministic sequence.

Recent optical breakthroughs amplify the potential. An April 2025 record demonstrated 1.02 petabits per second over 1,808km using a 19-core fiber. Applied to a 200km delay line, that rate could store ~127.5 GB in flight (1.02 Pb/s × 1 ms = 1.02 Tb).
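Both capacity figures come from the same formula, C = B × Δt, evaluated at different line rates. The sketch below (helper names are hypothetical) evaluates each rate at the rounded 1 ms and at the ~0.98 ms a 200 km loop actually gives. It also expresses the line rate as read bandwidth relative to the H200's 4.8 TB/s:

```python
# Hypothetical helper names; the formula C = B * dt is the one in the text.
H200_HBM3E_TB_S = 4.8                      # NVIDIA H200 HBM3e bandwidth, from the text
DELAYS_S = {"rounded 1 ms": 1e-3,
            "200 km / 204,191 km/s": 200 / 204_191}

def bits_in_flight(rate_bps: float, delay_s: float) -> float:
    """Capacity of a delay line: bits launched during one pass are all still in the fiber."""
    return rate_bps * delay_s

def to_gigabytes(bits: float) -> float:
    return bits / 8 / 1e9                  # decimal gigabytes

for label, rate_bps in (("256 Tb/s link", 256e12), ("1.02 Pb/s 19-core record", 1.02e15)):
    # Every stored bit passes the read point once per loop, so read bandwidth = line rate.
    read_tb_s = rate_bps / 8 / 1e12
    print(f"{label}: read bandwidth {read_tb_s:.1f} TB/s "
          f"({read_tb_s / H200_HBM3E_TB_S:.1f}x H200 HBM3e)")
    for name, dt in DELAYS_S.items():
        print(f"  capacity at {name}: {to_gigabytes(bits_in_flight(rate_bps, dt)):.1f} GB")
```

At the exact ~0.98 ms delay, the 256 Tb/s loop holds about 31.3 GB rather than 32 GB. The round numbers in the text assume a full 1 ms.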

Engineering Challenges

The engineering challenges are significant.

- Signal Integrity: A 200km loop suffers ~40 dB of loss (roughly 0.2 dB/km), requiring Erbium-Doped Fiber Amplifiers (EDFAs), which add noise on every pass. Maintaining bit integrity over millions of recirculations requires advanced Forward Error Correction.

- Thermal Stability: Fiber delay changes with temperature, potentially requiring stability within 0.002°C or a flexible timing system.
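Both challenges can be put into rough numbers. This is an illustrative sketch only. Several inputs are assumptions rather than figures from the proposal:

- attenuation of 0.2 dB/km
- thermal coefficient of delay of ~7 ppm/K
- single-pass OSNR of 35 dB (hypothetical)

The 10·log10(N) rule further assumes N identical amplified passes whose noise adds linearly.

```python
import math

# Illustrative assumptions (not from the proposal):
FIBER_LOSS_DB_PER_KM = 0.2          # typical standard single-mode fiber near 1550 nm
TCD_PPM_PER_K = 7.0                 # assumed thermal coefficient of delay
OSNR_ONE_PASS_DB = 35.0             # hypothetical OSNR after one amplified pass
LOOP_KM = 200.0
LOOP_DELAY_S = LOOP_KM / 204_191.0  # ~0.98 ms, using the velocity quoted in the text

def loop_loss_db(length_km: float = LOOP_KM) -> float:
    """Total attenuation the amplifiers must make up on each pass."""
    return length_km * FIBER_LOSS_DB_PER_KM

def osnr_after_passes_db(passes: int, osnr_one_pass_db: float = OSNR_ONE_PASS_DB) -> float:
    """Noise from N identical amplified passes adds up, so OSNR falls by 10*log10(N) dB."""
    return osnr_one_pass_db - 10 * math.log10(passes)

def delay_drift_s(delta_temp_k: float, delay_s: float = LOOP_DELAY_S) -> float:
    """Change in the one-pass delay for a temperature change of delta_temp_k kelvin."""
    return delay_s * TCD_PPM_PER_K * 1e-6 * delta_temp_k

print(f"loss per pass: {loop_loss_db():.0f} dB")
for n in (1, 1_000, 1_000_000):     # 1 pass, ~1 s, ~16 min at ~0.98 ms per pass
    print(f"OSNR after {n:>9,} passes: {osnr_after_passes_db(n):6.1f} dB")
print(f"delay drift per 1 K: {delay_drift_s(1.0) * 1e9:.2f} ns")
print(f"delay drift per 0.002 K: {delay_drift_s(0.002) * 1e12:.1f} ps")
```

Under these assumptions, OSNR falls by 30 dB after about one second of circulation. This is consistent with the need for FEC: a practical loop would presumably have to detect, correct, and relaunch the data periodically rather than only amplify it.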

Feasibility & Workload Analysis

The proposal's feasibility is uniquely tied to AI workload patterns. Model weights are read sequentially, making them ideal for streaming; dynamic data such as the KV cache is not. This points to a hybrid architecture:

| Workload Component | Access Pattern | Suited for Fiber Cache? |
|---|---|---|
| Model Weights | Deterministic, Sequential | Yes |
| KV Cache | Dynamic, Non-sequential | No (Requires HBM/CXL) |
| Input Embeddings | Predictable | Yes |
| Activation Buffers | Transient, Random | No |
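A toy model of the read head shows why weights get a "Yes" and the KV cache a "No." This is a minimal sketch with two assumptions: the loop is divided into a hypothetical 1,000,000 equal slots, and the period is 1 ms. Data stored at slot offset x passes the head at times x + k·N. Blocks read in storage order wait nothing after the first one, while requests for arbitrary offsets at arbitrary times wait half a loop on average:

```python
import random
import statistics

LOOP_PERIOD_MS = 1.0     # one circulation (~1 ms, as in the text)
N_SLOTS = 1_000_000      # hypothetical: the loop split into equal-size slots

def wait_slots(t_now: int, offset: int) -> int:
    """Slots until the data stored at `offset` next reaches the read head.
    Data at offset x passes the head at times x + k * N_SLOTS (k = 0, 1, 2, ...)."""
    return (offset - t_now) % N_SLOTS

def to_ms(slots: float) -> float:
    return slots * LOOP_PERIOD_MS / N_SLOTS

def sequential_mean_wait_ms(n_blocks: int) -> float:
    """Weight-like access: blocks are read in the same order they are stored."""
    block = N_SLOTS // n_blocks
    t, waits = 0, []
    for i in range(n_blocks):
        w = wait_slots(t, i * block)
        waits.append(w)
        t += w + block           # consume the block while it streams past
    return to_ms(statistics.mean(waits))

def random_mean_wait_ms(n_requests: int, seed: int = 0) -> float:
    """KV-cache-like access: arbitrary offsets requested at arbitrary times."""
    rng = random.Random(seed)
    waits = [wait_slots(rng.randrange(N_SLOTS), rng.randrange(N_SLOTS))
             for _ in range(n_requests)]
    return to_ms(statistics.mean(waits))

print(f"sequential (weights): {sequential_mean_wait_ms(100):.3f} ms average wait")
print(f"random (KV cache):    {random_mean_wait_ms(10_000):.3f} ms average wait")
```

This is the pipelining argument in miniature. If the accelerator consumes each layer as it streams past, the loop latency is paid once rather than on every access.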

Physical & Economic Reality

- Physical Form Factor: 200km of fiber can be spooled into a ~7-10 liter volume (basketball-sized), fitting in a server rack.

- Cost: In early 2026, 200km of single-mode fiber costs ~$320, decoupling cost from silicon lithography.

- Power Efficiency: A photonic cache consumes ~50-60W (mainly for EDFAs), while DRAM refresh for a 1TB model can waste ~100W.
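The form-factor and cost bullets can be sanity-checked with simple geometry and division. This sketch computes only the volume of the fiber itself, treated as one solid cylinder. It assumes common coated diameters of 200 µm and 250 µm and ignores the spool hub and winding gaps. The cost-per-GB figure covers the fiber alone, not transceivers or amplifiers:

```python
import math

def fiber_volume_liters(length_km: float, coated_diameter_um: float) -> float:
    """Volume of the fiber treated as one solid cylinder (no spool hub, no packing gaps)."""
    radius_m = coated_diameter_um * 1e-6 / 2
    volume_m3 = math.pi * radius_m**2 * (length_km * 1e3)
    return volume_m3 * 1e3           # 1 m^3 = 1000 liters

for diameter_um in (200, 250):
    print(f"200 km at {diameter_um} um coating: {fiber_volume_liters(200, diameter_um):.1f} L")

FIBER_COST_USD = 320.0   # from the text
CAPACITY_GB = 32.0       # 256 Tb/s x 1 ms, from the text
print(f"fiber cost per GB in flight: ${FIBER_COST_USD / CAPACITY_GB:.0f}")
```

A raw fiber volume of roughly 6 to 10 liters is consistent with the ~7-10 liter figure above. A real spool with a hub and imperfect winding would be somewhat larger.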

The Convergence Enabler & Industry Alternatives

The convergence enabler is Co-Packaged Optics, as seen in NVIDIA's 2026 Rubin platform, which mandates optical I/O and provides a native interface for a delay-line port.

Industry alternatives exist for nearer-term scaling:

- Carmack also suggested ganging cheap flash memory for bandwidth (e.g., FlashGNN protocols).

- Compute Express Link 4.0 provides another path for memory pooling.

A comparison highlights their different niches:

| Feature | CXL Memory Pool | Fiber Delay Line |
|---|---|---|
| Protocol | PCIe-based (Load/Store) | Streaming (Serial) |
| Best For | Random access / KV Cache | Model Weights |
| Latency | 200-500 ns | 1 ms (Loop cycle) |
| Scalability | Rack-wide sharing | Massive bandwidth density |

Summary

In summary, the 200km fiber cache is a compelling pathfinder. Its theoretical advantages for AI are clear, but practical deployment hinges on solving recirculation noise and thermal control. It reframes the memory wall as a transmission challenge, accelerating the shift toward optical computing and hybrid memory architectures for the era of AGI.
